Modern CPU Architecture 2: Microarchitecture

In the last article we talked about modern CPU architecture: what a CPU is, a brief history of the CPU, the concept of computing abstraction layers, and Instruction Set Architectures (ISAs). The ISA is what we normally refer to as the architecture of the CPU.

Today we will delve into what the microarchitecture of the CPU is made up of. In other words: how exactly is an ISA implemented, what are its building blocks, and how does it operate? This is an in-depth explanation.

Key Building Blocks in a CPU’s Microarchitecture

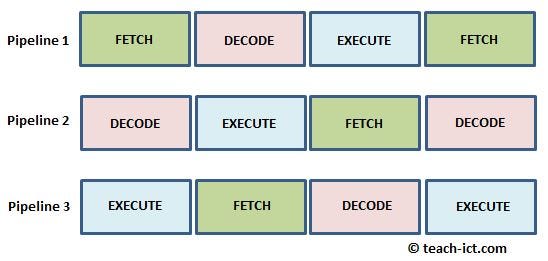

A microarchitecture is an implementation of an Instruction Set Architecture. The microarchitecture operates on a four-step cycle:

- The first step, “Fetch”, reads the next instruction from memory so the CPU knows exactly what the program wants executed.

- The next step, “Decode”, breaks each instruction down into the CPU’s native internal operations. A single instruction may be broken into multiple internal operations at this stage.

- Once instructions have been decoded, the CPU needs to execute them, which brings us to the next step, “Execute”. There are numerous types of execution operations: arithmetic operations (add, multiply, divide, etc.), Boolean operations (AND, NOT, OR, XOR, etc.), and data comparison and decision operations, which choose where to go next in the code. Operations of this last kind are known as “branches”, as they can steer code to different places. The execute stage of a CPU varies depending on the ISA, as CPUs with more sophisticated ISAs can perform many more kinds of operations.

- Finally, the CPU stores the results, either locally in registers or in memory. This is known as the “Write back” step.

These operations make up the fundamental building blocks of a CPU. Put together, they are referred to as the CPU’s “pipeline”.
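The four steps above can be sketched as a small interpreter loop. This is only a toy model: the instruction format and the opcodes (`li`, `add`, `and`, `bne`) are made up for illustration and do not correspond to any real ISA, and a real CPU overlaps these steps rather than finishing one instruction before starting the next.

```python
# Toy machine: the tuple format (op, dest, a, b) and the opcodes are hypothetical.
def run(program, registers=None):
    """Run a list of (op, dest, a, b) tuples through fetch/decode/execute/write back."""
    regs = dict(registers or {})
    pc = 0  # program counter: index of the next instruction to fetch
    while pc < len(program):
        # Fetch: read the instruction the program counter points at.
        instruction = program[pc]
        # Decode: split the instruction into its operation and operands.
        op, dest, a, b = instruction
        next_pc = pc + 1
        result = None
        # Execute: perform the arithmetic, Boolean, or branch operation.
        if op == "li":        # load immediate: `a` is a literal value
            result = a
        elif op == "add":
            result = regs[a] + regs[b]
        elif op == "and":
            result = regs[a] & regs[b]
        elif op == "bne":     # branch to index `dest` if regs[a] != regs[b]
            if regs[a] != regs[b]:
                next_pc = dest
        else:
            raise ValueError(f"unknown op {op!r}")
        # Write back: store the result in a register (branches produce none).
        if result is not None:
            regs[dest] = result
        pc = next_pc
    return regs


# Sum the numbers 1..5 with a loop that ends in a branch.
program = [
    ("li", "r0", 0, None),       # r0 = 0 (running total)
    ("li", "r1", 0, None),       # r1 = 0 (loop counter)
    ("li", "r2", 1, None),       # r2 = 1 (increment)
    ("li", "r3", 5, None),       # r3 = 5 (loop limit)
    ("add", "r1", "r1", "r2"),   # index 4: r1 += 1
    ("add", "r0", "r0", "r1"),   # r0 += r1
    ("bne", 4, "r1", "r3"),      # jump back to index 4 until r1 == r3
]
regs = run(program)
print(regs["r0"])  # 15
```

The `bne` instruction is the only one that changes where the next fetch comes from, which is exactly why branches get so much attention later in this article.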

Pipeline Depth

Now that we have described what a pipeline looks like, what do modern microprocessors look like? Over the years the average number of pipeline stages has grown. A pipeline is similar to an assembly line: the more stages are added, the less work is done at each individual stage. The more pipeline stages you have, the faster each stage can run and the more instructions are being worked on in parallel. A modern microprocessor has about 15–20 stages.

The Fetch and Decode steps typically take 6–10 stages; collectively these are called the frontend of the microprocessor. The Execute and Write back steps have also grown to roughly 6–10 stages; these are called the backend of the microprocessor. A CPU’s pipeline is synchronous, which means each pipeline stage is controlled by a clock signal, and data moves from one pipeline stage to the next each time the CPU clock completes a cycle. The number of stages partially determines the peak frequency of the CPU, because the amount of logic in each stage determines how fast the clock can run. Modern CPUs can run at up to 5 GHz. If a CPU runs at 5 GHz, each stage needs to complete its work in one five-billionth of a second, or 0.2 nanoseconds.
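The arithmetic behind those numbers is short enough to check directly. The sketch below assumes an idealized pipeline that never stalls and completes one instruction per cycle once it is full; the function names are ours, chosen for illustration.

```python
def clock_period_ns(frequency_hz):
    """Time each pipeline stage has to finish its work, in nanoseconds."""
    return 1e9 / frequency_hz


def ideal_pipeline_cycles(num_instructions, num_stages):
    """Cycles to push instructions through an ideal pipeline with no stalls."""
    # The first instruction needs num_stages cycles to reach the end;
    # every later instruction completes one cycle after the one before it.
    return num_stages + (num_instructions - 1)


period = clock_period_ns(5e9)              # 0.2 ns per stage at 5 GHz
cycles = ideal_pipeline_cycles(1000, 20)   # 1019 cycles for 1000 instructions
print(period, cycles, cycles * period)     # total time is roughly 204 ns
```

Without pipelining, the same 1000 instructions would need 1000 × 20 = 20,000 stage-times, which is where the assembly-line comparison comes from.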

Speculation

If you recall the basic pipeline illustrated above, we fetch instructions at the beginning and execute them towards the end. Some of these instructions are known as branches. A branch represents a decision point or “fork in the road”, like an exit on a highway: do we keep going, or exit now and follow a different path? Executing the branch is what makes that decision. As the pipeline grows, the fetch stage gets farther and farther away from the answer of which path to take, so the CPU keeps fetching along a guessed path in the meantime. When the branch says to take a different path, we need to tell the beginning of the pipeline to redirect to a different instruction, and the work that was in progress is thrown away.

This is bad for both performance and power, since we have spent time and energy executing instructions that were not needed for the program’s execution. We could avoid speculation by simply stopping every time we saw a branch and waiting for it to execute and tell us which path in the code to take. This would be safe, but very slow: there are lots of branches in most code, which implies a lot of time spent waiting.

As pipelines get longer, the penalty for guessing wrong gets worse. Fetch moves further away from execute, which means it takes more stages to realize that we are executing on the wrong path. To remedy this, microprocessor designers invest heavily in making accurate predictions at the beginning of the pipeline. We call this the art of branch prediction.
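To get a feel for the trade-off, here is a back-of-the-envelope cost model. All of the numbers are assumptions picked for illustration (20% of instructions are branches, 5% of predictions are wrong, and the penalty equals the number of stages between fetch and execute); real workloads and real CPUs will differ.

```python
def cycles_lost_stalling(branch_fraction, stages_to_execute):
    """Average cycles lost per instruction if fetch waits for every branch to execute."""
    return branch_fraction * stages_to_execute


def cycles_lost_predicting(branch_fraction, mispredict_rate, stages_to_execute):
    """Average cycles lost per instruction if we guess and only pay when wrong."""
    return branch_fraction * mispredict_rate * stages_to_execute


BRANCH_FRACTION = 0.2   # assumed: one instruction in five is a branch
MISPREDICT_RATE = 0.05  # assumed: the predictor is right 95% of the time

# Compare a short pipeline with a long one.
short_stall = cycles_lost_stalling(BRANCH_FRACTION, 5)
long_stall = cycles_lost_stalling(BRANCH_FRACTION, 15)
short_predict = cycles_lost_predicting(BRANCH_FRACTION, MISPREDICT_RATE, 5)
long_predict = cycles_lost_predicting(BRANCH_FRACTION, MISPREDICT_RATE, 15)

print(short_stall, long_stall)       # stalling cost grows with pipeline depth
print(short_predict, long_predict)   # so does the misprediction cost, but from a much lower base
```

Under these assumptions the longer pipeline triples the cost in both cases, but predicting still loses only a small fraction of the cycles that stalling would, which is why accuracy is worth so much design effort.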

When we see that we have gone down the wrong path, we can update or refine the prediction with what the right path was. Then the next time we see that address, the branch predictor can tell us to go to a different address. Modern CPUs can often predict branches with near-perfect accuracy, which makes them seem almost clairvoyant. When a microprocessor executes instructions that come after a branch without yet knowing whether that branch is taken, it is referred to as Speculative Execution.
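One classic textbook way to implement this "predict, then refine" loop is a table of 2-bit saturating counters, one per branch address. The sketch below uses that scheme purely as an illustration; it is far simpler than what modern CPUs actually do, and the class and function names are our own.

```python
class TwoBitPredictor:
    """Per-address 2-bit saturating counters: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self):
        self.counters = {}  # branch address -> counter in 0..3

    def predict(self, address):
        # Unseen branches start at 1 ("weakly not taken").
        return self.counters.get(address, 1) >= 2

    def update(self, address, taken):
        # Refine the prediction with what the branch actually did.
        counter = self.counters.get(address, 1)
        counter = min(counter + 1, 3) if taken else max(counter - 1, 0)
        self.counters[address] = counter


def accuracy(predictor, address, outcomes):
    """Fraction of outcomes the predictor guessed correctly, updating as it goes."""
    correct = 0
    for taken in outcomes:
        if predictor.predict(address) == taken:
            correct += 1
        predictor.update(address, taken)
    return correct / len(outcomes)


# A loop branch: taken 9 times, then not taken once on exit, repeated 10 times.
outcomes = ([True] * 9 + [False]) * 10
loop_accuracy = accuracy(TwoBitPredictor(), 0x400, outcomes)
print(loop_accuracy)  # 0.89
```

The counter needs two wrong guesses in a row before it flips its prediction, so a single loop exit does not make it forget that the branch is usually taken.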

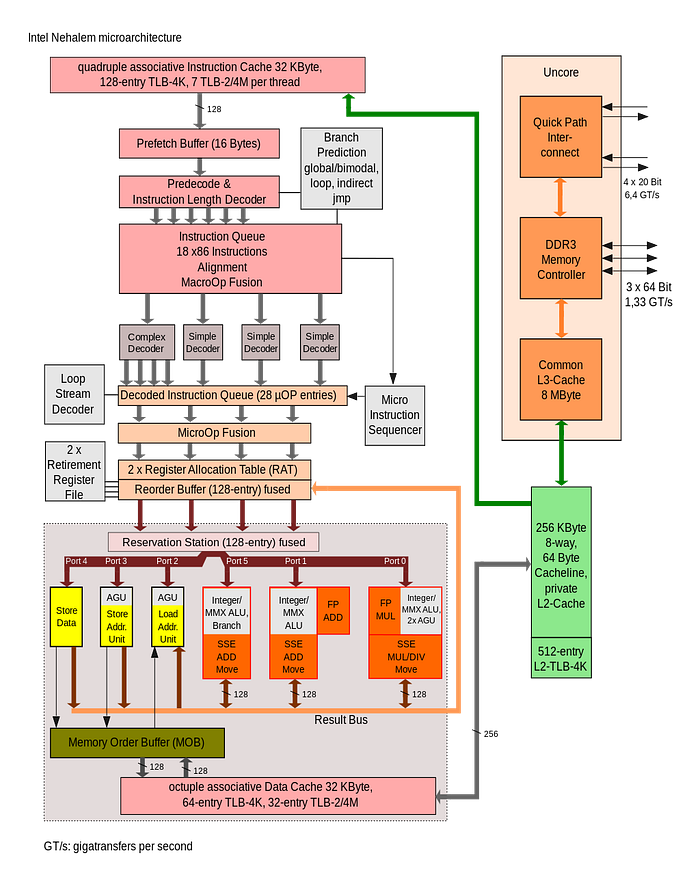

Front End: Predict and Fetch

Branch predictors have become incredibly complex in order to improve their accuracy while still being able to steer instruction fetch at high frequencies. Branch predictors today can often record and learn from the history of hundreds or even thousands of past branches in order to make a single prediction about the next branch and where it is going. In the way they learn from past behavior to anticipate future behavior, modern branch predictors can be thought of as a kind of precursor to artificial intelligence. They have become so accurate that they are now in charge of deciding which address to fetch next, even if the prediction ends up being “keep calm and carry on”.
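To see why remembering past branches helps, consider a branch that repeats the pattern taken, taken, not taken. The sketch below is a heavily simplified history-based predictor of our own devising (2-bit counters indexed by the branch address plus the last few outcomes); real predictors track far more history and use much more elaborate structures.

```python
class HistoryPredictor:
    """2-bit counters indexed by branch address plus the last few branch outcomes."""

    def __init__(self, history_length):
        self.history_length = history_length
        self.history = (False,) * history_length  # most recent outcome last
        self.counters = {}  # (address, history) -> counter in 0..3

    def predict(self, address):
        return self.counters.get((address, self.history), 1) >= 2

    def update(self, address, taken):
        key = (address, self.history)
        counter = self.counters.get(key, 1)
        self.counters[key] = min(counter + 1, 3) if taken else max(counter - 1, 0)
        if self.history_length:
            # Shift the newest outcome in and drop the oldest.
            self.history = (self.history + (taken,))[-self.history_length:]


def accuracy(predictor, address, outcomes):
    """Fraction of outcomes the predictor guessed correctly, updating as it goes."""
    correct = 0
    for taken in outcomes:
        if predictor.predict(address) == taken:
            correct += 1
        predictor.update(address, taken)
    return correct / len(outcomes)


pattern = [True, True, False] * 100
no_history = accuracy(HistoryPredictor(0), 0x400, pattern)    # about 0.66
with_history = accuracy(HistoryPredictor(4), 0x400, pattern)  # about 0.99
print(no_history, with_history)
```

With no history the predictor gets every "not taken" wrong, because the branch is taken most of the time. With four outcomes of history, each point in the pattern gets its own counter, and after a short warm-up the predictor stops missing.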

CPU frequencies have increased much faster than memory speeds, which means that, measured in clock cycles, it takes longer to fetch data from memory. To help offset the long round trip to main memory and back, we keep local copies of main memory contents in internal structures known as caches. The frontend has an instruction cache so that it can read instructions in just one or two cycles instead of the hundreds it may take to go to main memory. For power and performance optimization, many adjacent instructions are fetched at the same time and then handed off to the decoders. If the instruction cache does not have the requested instructions, they are requested from the memory subsystem. The main goal of the frontend of a pipeline is to ensure there are always enough instructions for the backend to execute, and to avoid idle time spent waiting for instruction bytes from memory.
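A minimal sketch of the idea, assuming a direct-mapped instruction cache with 64-byte lines, a 2-cycle hit, and a 200-cycle trip to main memory. All of these parameters are illustrative assumptions, not figures for any particular CPU.

```python
class InstructionCache:
    """Direct-mapped cache model that only tracks which memory line sits in each slot."""

    def __init__(self, num_lines=64, line_bytes=64, hit_cycles=2, miss_cycles=200):
        self.num_lines = num_lines
        self.line_bytes = line_bytes
        self.hit_cycles = hit_cycles
        self.miss_cycles = miss_cycles
        self.tags = [None] * num_lines  # memory line number held by each slot

    def fetch(self, address):
        """Return the cycles needed to fetch the instruction bytes at `address`."""
        line_number = address // self.line_bytes
        slot = line_number % self.num_lines
        if self.tags[slot] == line_number:
            return self.hit_cycles
        # Miss: bring the whole line in from the memory subsystem.
        self.tags[slot] = line_number
        return self.miss_cycles


cache = InstructionCache()
# A loop body of 16 four-byte instructions (one 64-byte line), executed 100 times.
addresses = [0x1000 + 4 * i for i in range(16)] * 100
total_cycles = sum(cache.fetch(a) for a in addresses)
print(total_cycles)  # 3398
```

Only the very first fetch pays the 200-cycle cost. Because the whole line arrives at once, the 15 adjacent instructions and every later loop iteration hit in the cache, compared with 320,000 cycles if each fetch went to main memory.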

Front End: Decode

The second half of the frontend is where program instructions are decoded into the microarchitecture’s internal operations, which are called micro-operations. This is the strongest connection between the ISA and the microarchitecture. ISA instructions often include additional bits that give the CPU more information relevant to the operation, which the CPU uses to decode and execute the instruction efficiently according to its microarchitecture. Microarchitectures are typically built so that most, but not all, instructions map directly onto a single micro-operation, which helps simplify the backend of the pipeline. However, some more complex instructions may require the generation of multiple micro-operations. The frontend is always looking at how to decode and prepare those instructions to be executed efficiently. Some microarchitectures cache the decoded micro-operations so they can be reused the next time the same instructions need to be decoded. This saves the energy required to decode them and improves performance when one-to-many expansion is common. After instructions are decoded or read from the decode cache, they are passed to the backend of the pipeline.
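The decode step and the decode cache can be sketched together. The instruction strings and the micro-operations they expand into below are invented for illustration; they are not the actual encoding or micro-op format of any real CPU.

```python
# Hypothetical ISA instructions and the hypothetical micro-operations they decode into.
DECODE_TABLE = {
    "add r1, r2": ["add r1, r2"],                          # one-to-one
    "add r1, [mem]": ["load tmp, [mem]", "add r1, tmp"],   # one-to-many
    "push r1": ["sub sp, 8", "store [sp], r1"],            # one-to-many
}


class Decoder:
    """Decoder with a small cache of already-decoded micro-operations."""

    def __init__(self):
        self.uop_cache = {}  # instruction address -> decoded micro-operations
        self.decodes = 0     # how many times the full decoder had to run

    def decode(self, address, instruction):
        if address in self.uop_cache:
            # Decode cache hit: skip the decoder entirely.
            return self.uop_cache[address]
        self.decodes += 1
        uops = list(DECODE_TABLE[instruction])
        self.uop_cache[address] = uops
        return uops


decoder = Decoder()
loop_body = [(0x100, "add r1, r2"), (0x104, "add r1, [mem]"), (0x108, "push r1")]
uops_issued = 0
for _ in range(50):  # run the same three-instruction loop 50 times
    for address, instruction in loop_body:
        uops_issued += len(decoder.decode(address, instruction))

print(decoder.decodes, uops_issued)  # 3 250
```

The backend receives 250 micro-operations, but the decoder itself only runs three times, which is the energy and performance saving described above.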

CPU Backend: To be Continued…